Product Management with GenAI: What Changes and What Doesn't

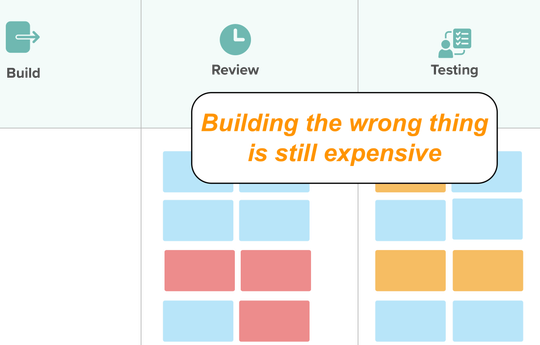

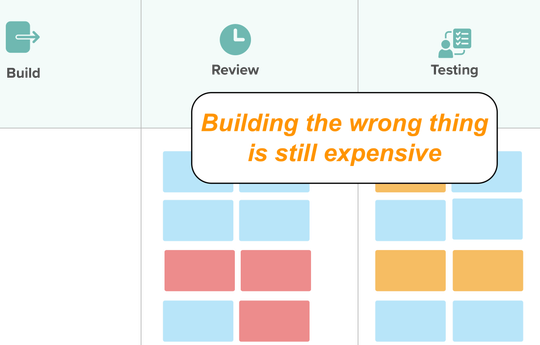

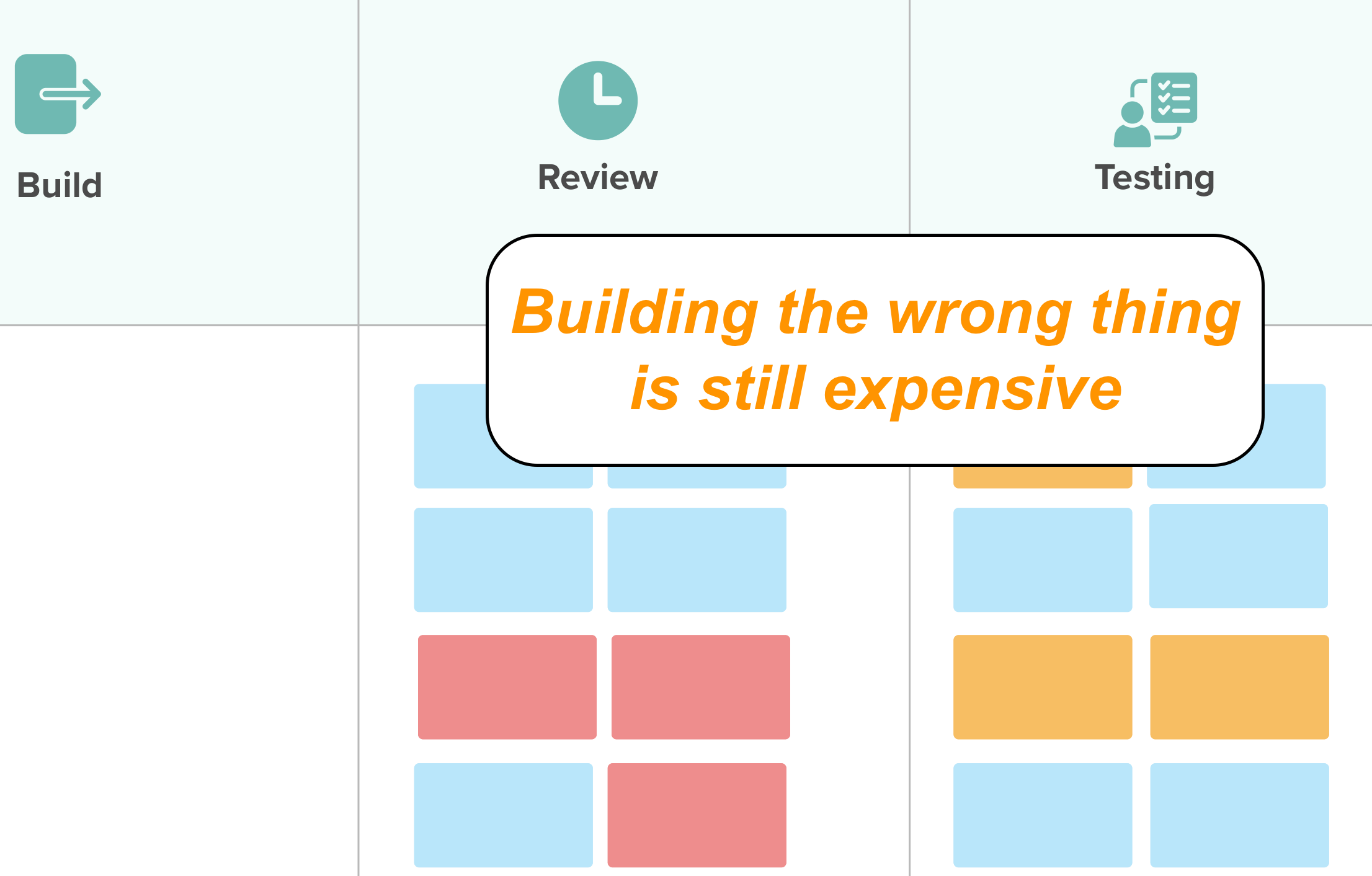

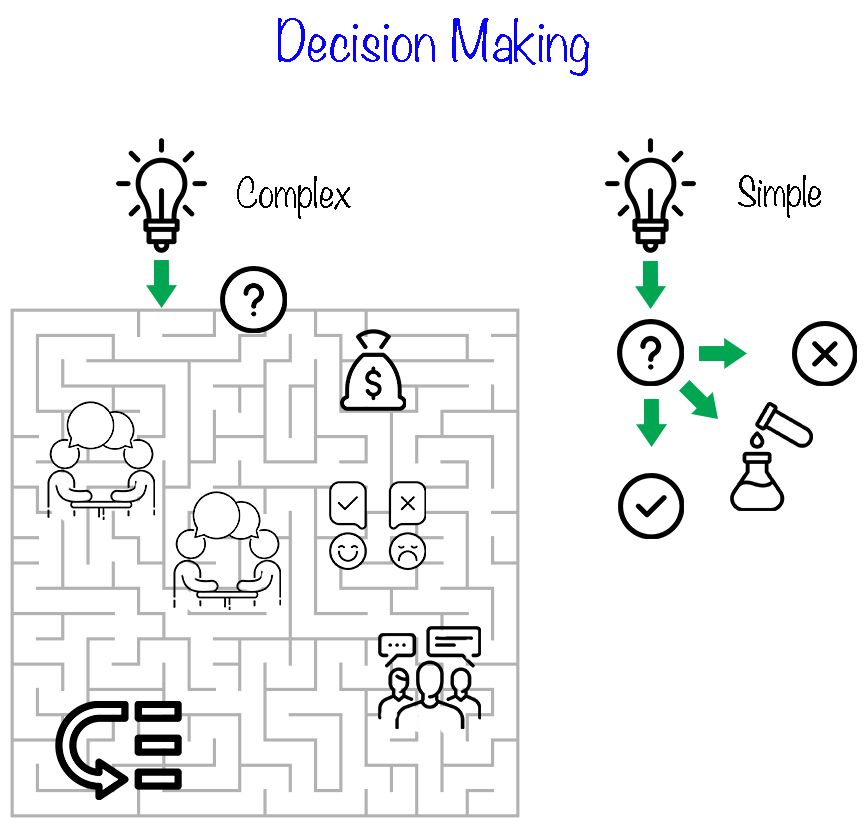

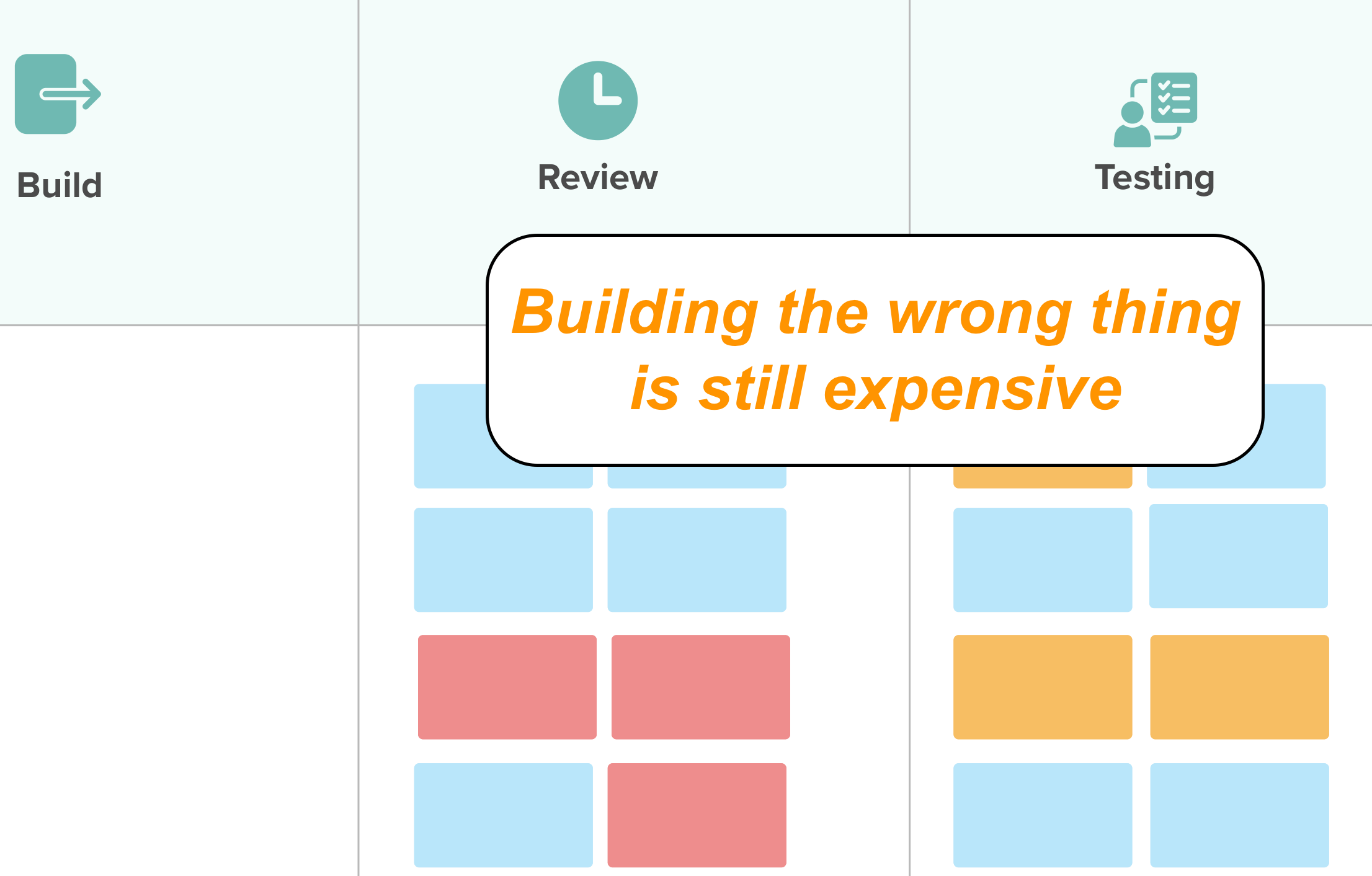

GenAI makes shipping cheap, not shipping right. Lean UX, Impact Mapping, and real-user experiments matter more when code is disposable.

Mark Levison has been writing about Scrum and Agile for two decades, including guides, handbooks, and multiple articles for InfoQ and the Scrum Alliance. His long-running blog is regarded as a leading source of trusted information and practical advice for Scrum teams and individuals.

Refinement drags on forever? 1hr feels like 3. Fix endless meetings, estimation debates, and unprepared teams using 5-min rules, Complexity Poker, and more

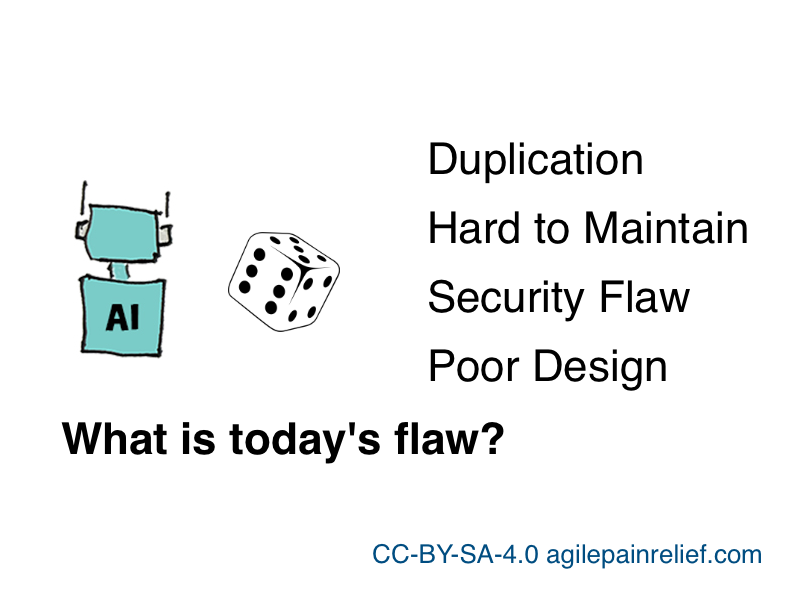

AI-generated code has 1.7x more issues and the flaws are structural, not fixable by code review. Why training rewards bluffing over quality, and what to do about it

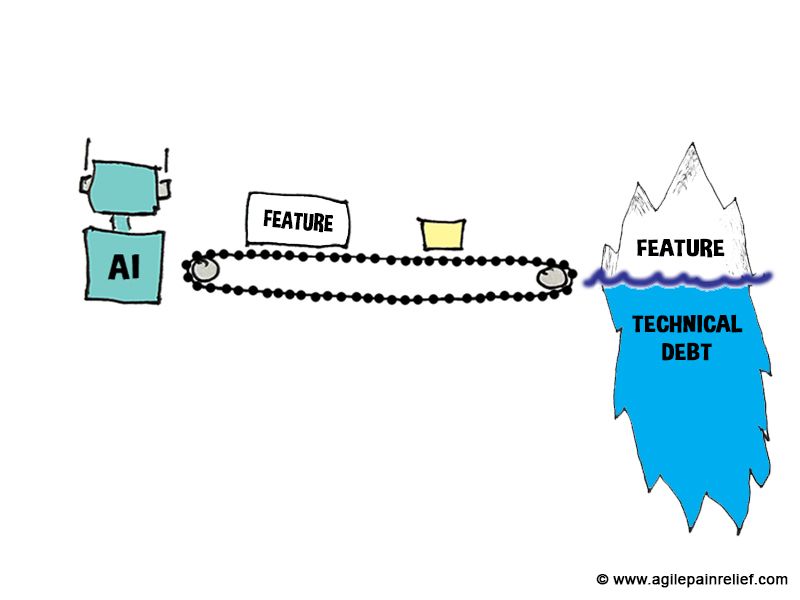

Research shows AI-generated code has 1.7x more issues than human code. Analysis of several studies reveals growing technical debt and complexity with GenAI tools.

GenAI helps you apply Systems Thinking to team problems. Understand the interconnected parts and avoid common traps like local optimization.

AI-generated code has 1.7x more issues and the flaws are structural, not fixable by code review. Why training rewards bluffing over quality, and what to do about it

Read full article

Research shows AI-generated code has 1.7x more issues than human code. Analysis of several studies reveals growing technical debt and complexity with GenAI tools.

Read full article

I tested AI code generation with the Tennis Kata. It outlined the basics, but the code was bloated, hard to read, and failed in a key case.

Read full article

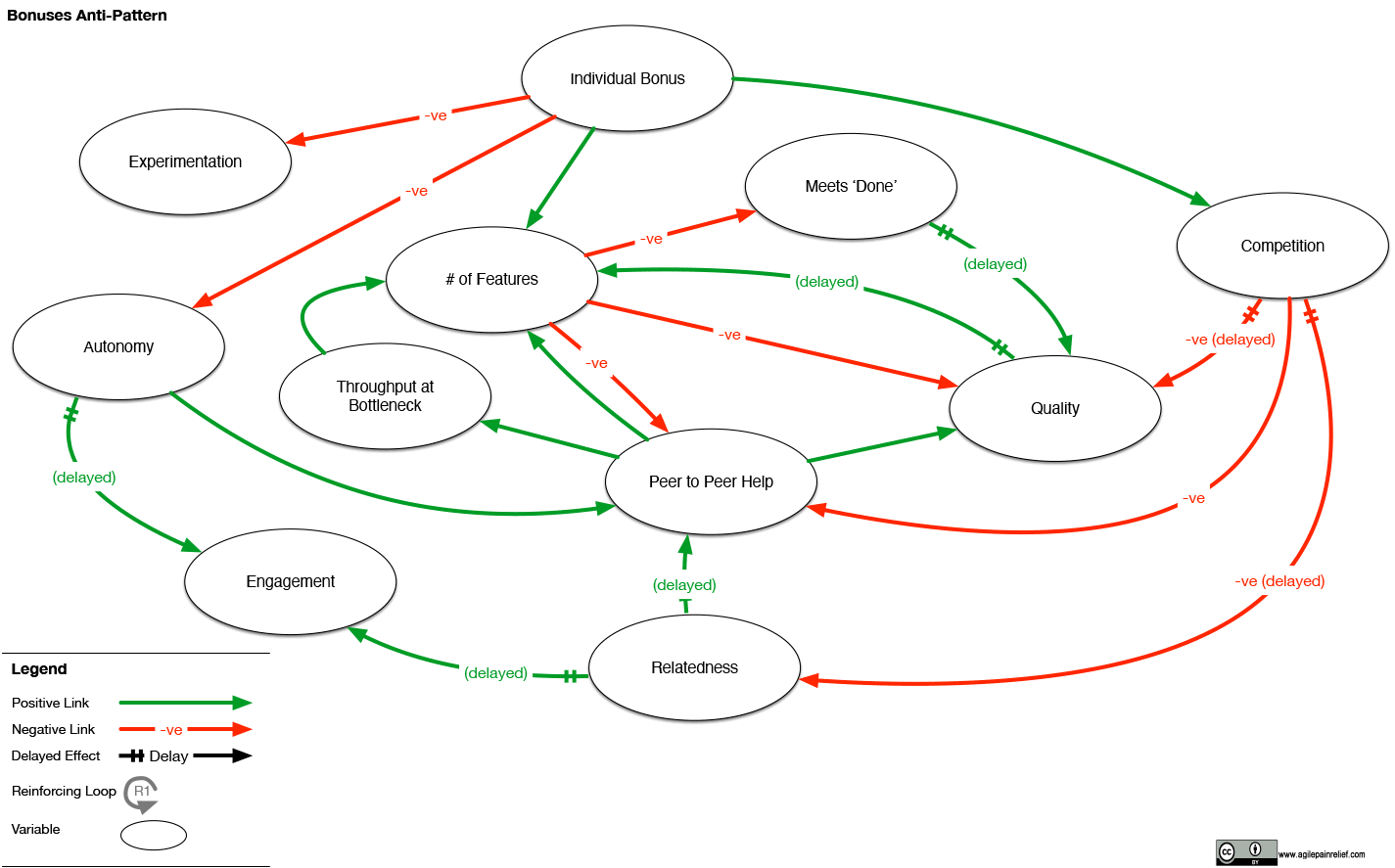

Bonuses might get more features now, but at the cost of quality, collaboration, and a sustainable system

Read full article

The more the manager takes control, the more decisions they make, the longer wait times for everything

Read full article

Hardening Sprints are one of the most common Scrum Anti-Patterns. They harm quality

Read full article

GenAI makes shipping cheap, not shipping right. Lean UX, Impact Mapping, and real-user experiments matter more when code is disposable.

Read full article

AI-generated code has 1.7x more issues and the flaws are structural, not fixable by code review. Why training rewards bluffing over quality, and what to do about it

Read full article

Research shows AI-generated code has 1.7x more issues than human code. Analysis of several studies reveals growing technical debt and complexity with GenAI tools.

Read full article

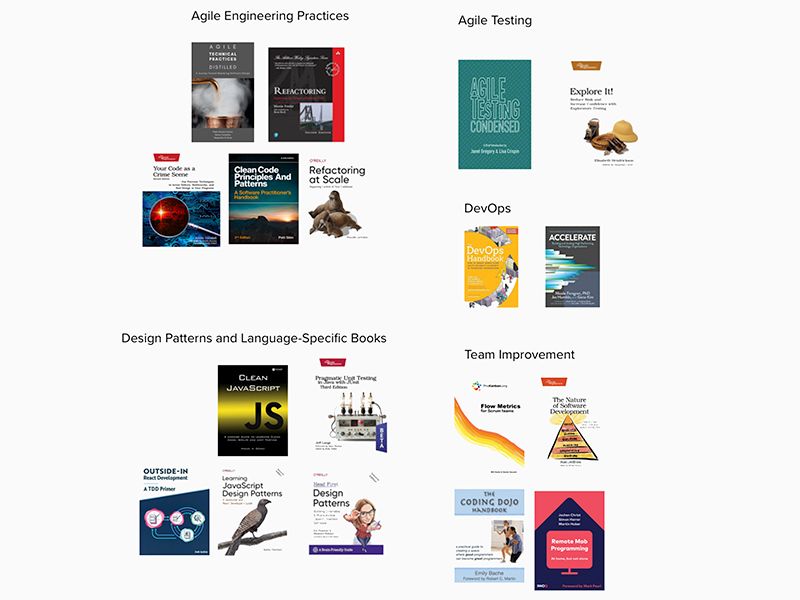

Reading resources for Scrum developers to help effectiveness and efficiency.

Read full article

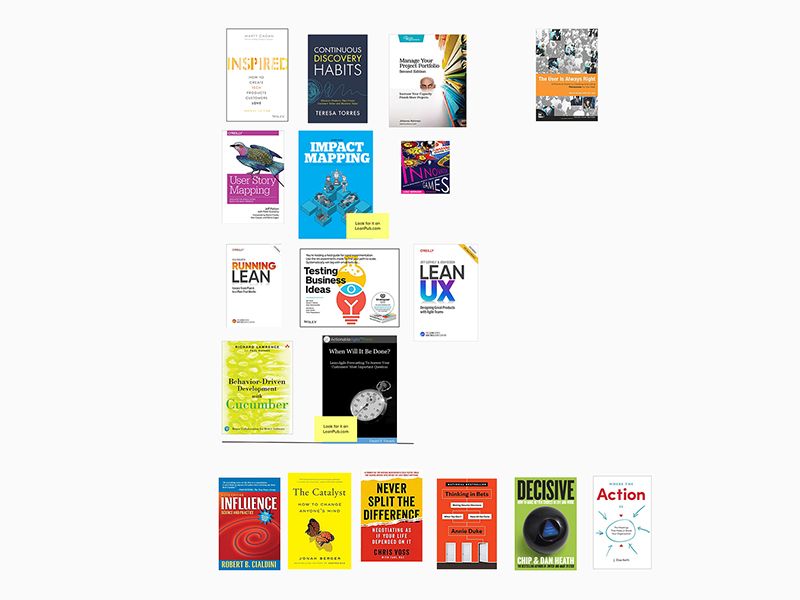

To be a good Product Owner, continued learning is essential.

Read full article

A good ScrumMaster knows that learning doesn’t begin and end with a workshop.

Read full article

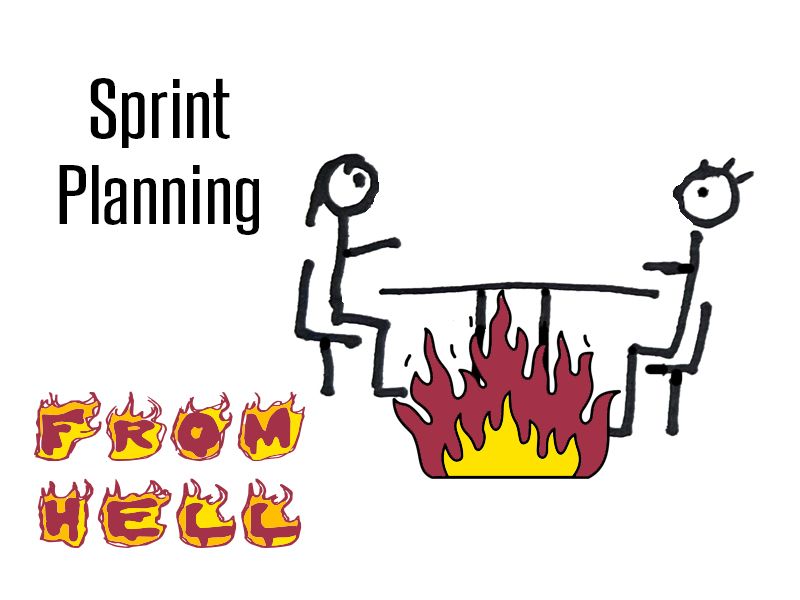

Does your team's Sprint Planning seem like a complete and utter waste of time? Most of the time, the planning isn't the real problem.

Read full article

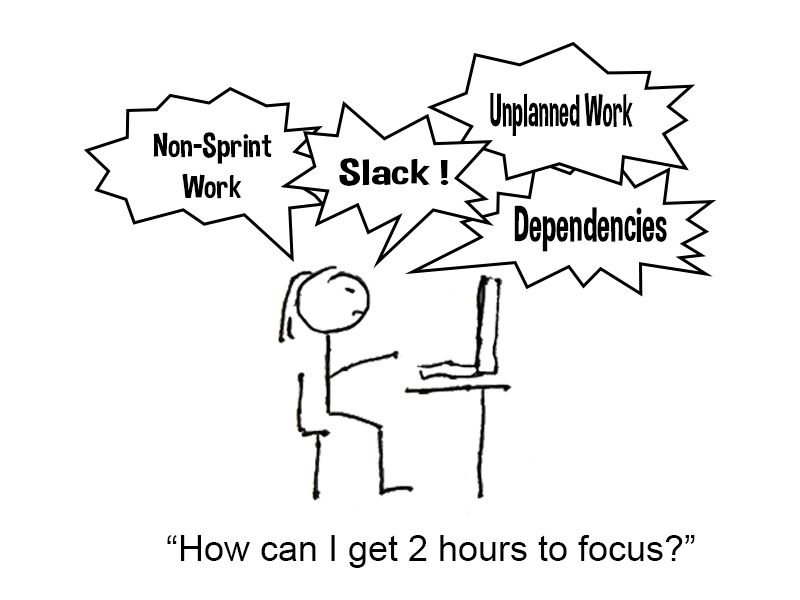

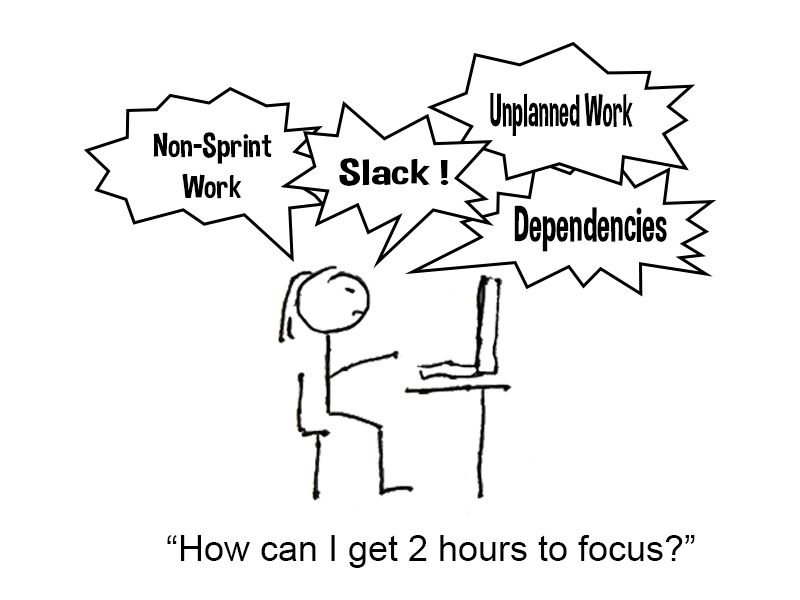

That thing where you’re trying to work but... Surviving interruptions in your team. We look at where they come from and how to eliminate them.

Read full article

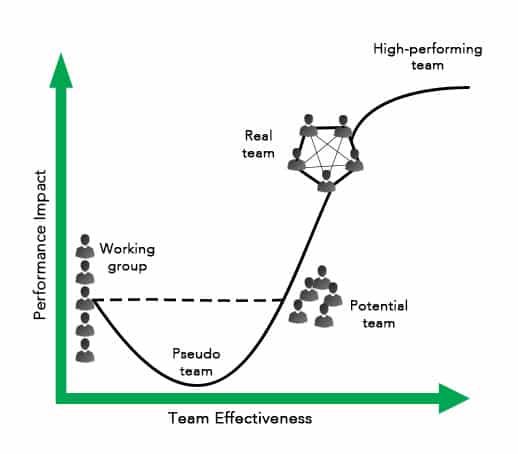

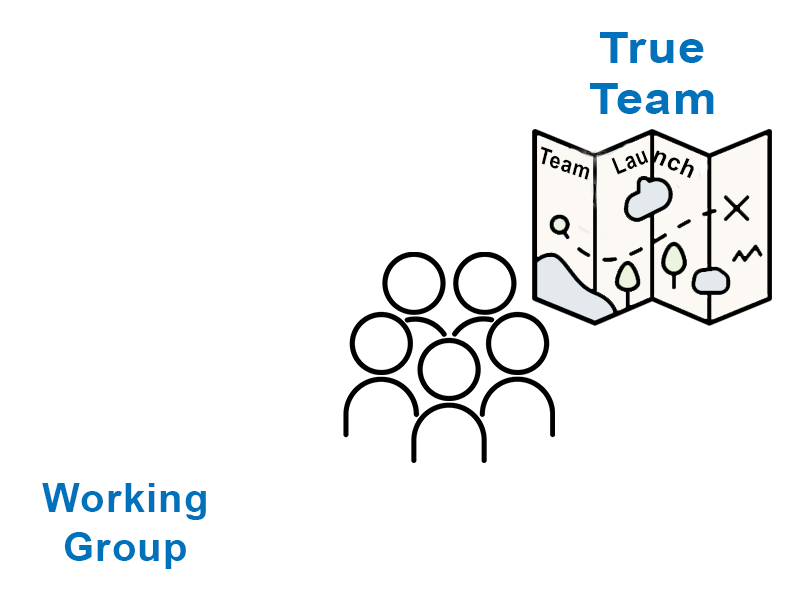

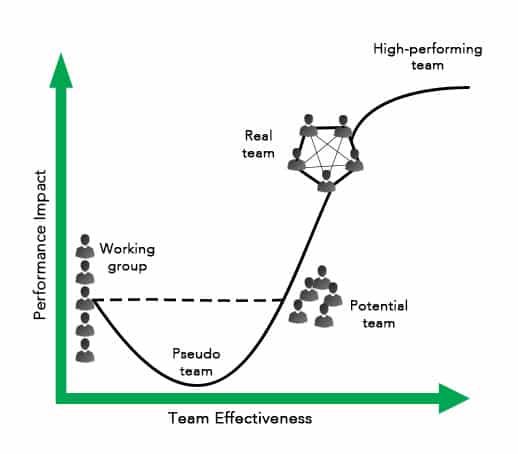

Grouping individuals together doesn’t make them a team. Building High Performance Teams takes time and effort. It is especially important in a world of Generative AI

Read full article

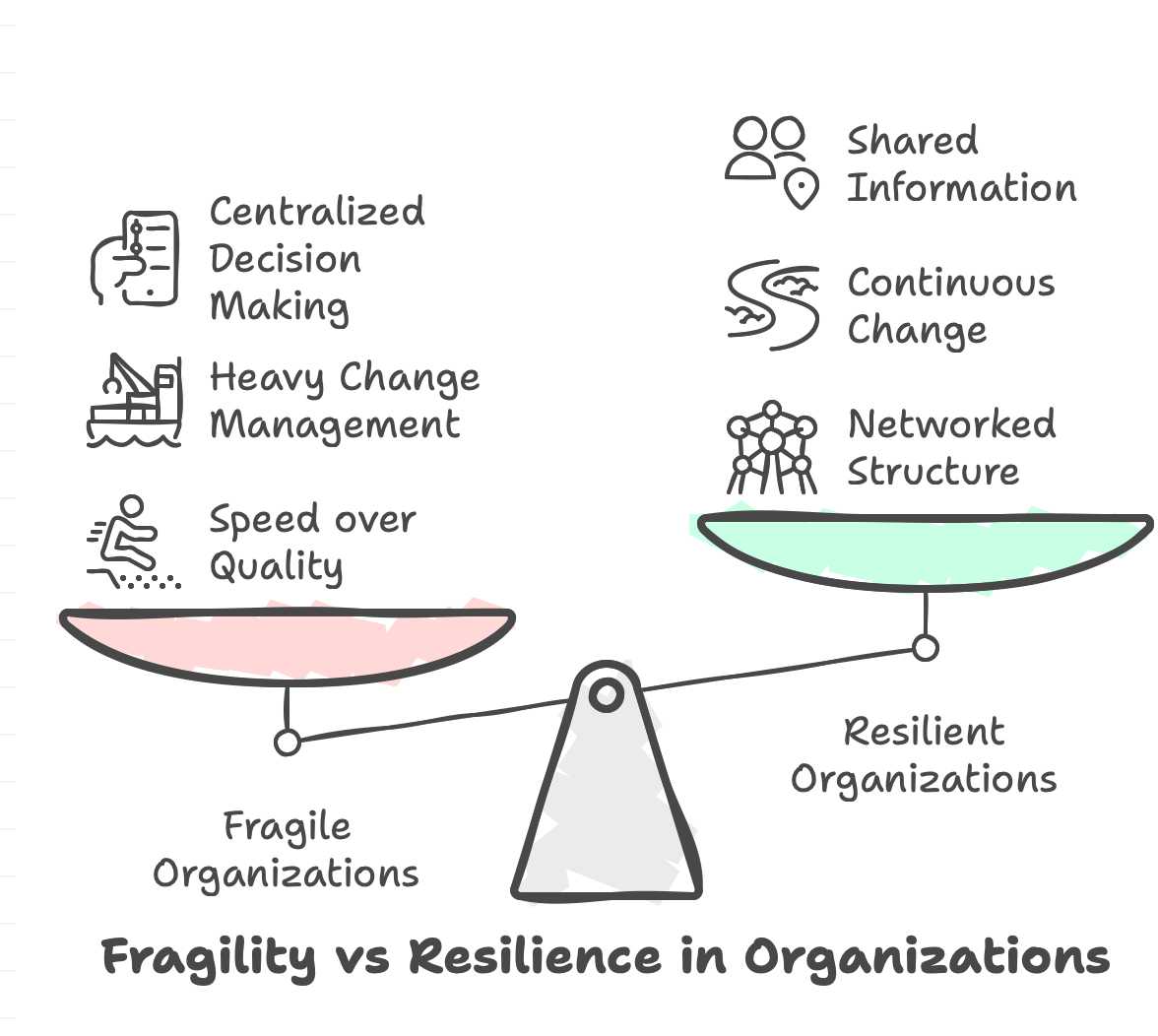

Learn how complexity tax undermines resilience through poor structure, slow decisions, and conflicting metrics. Practical strategies to simplify and strengthen your organization.

Read full article

A fragile organization that goes faster will be knocked over in the slightest wind.

Read full article

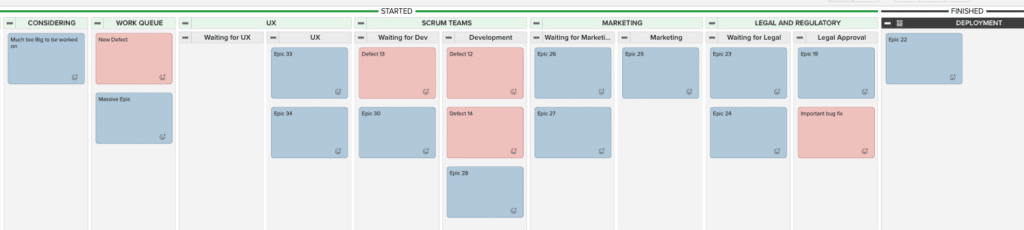

In most organizations, work spends more time waiting to be worked on, than being worked on. To go faster? Look for the queues

Read full article

GenAI makes shipping cheap, not shipping right. Lean UX, Impact Mapping, and real-user experiments matter more when code is disposable.

Read full article

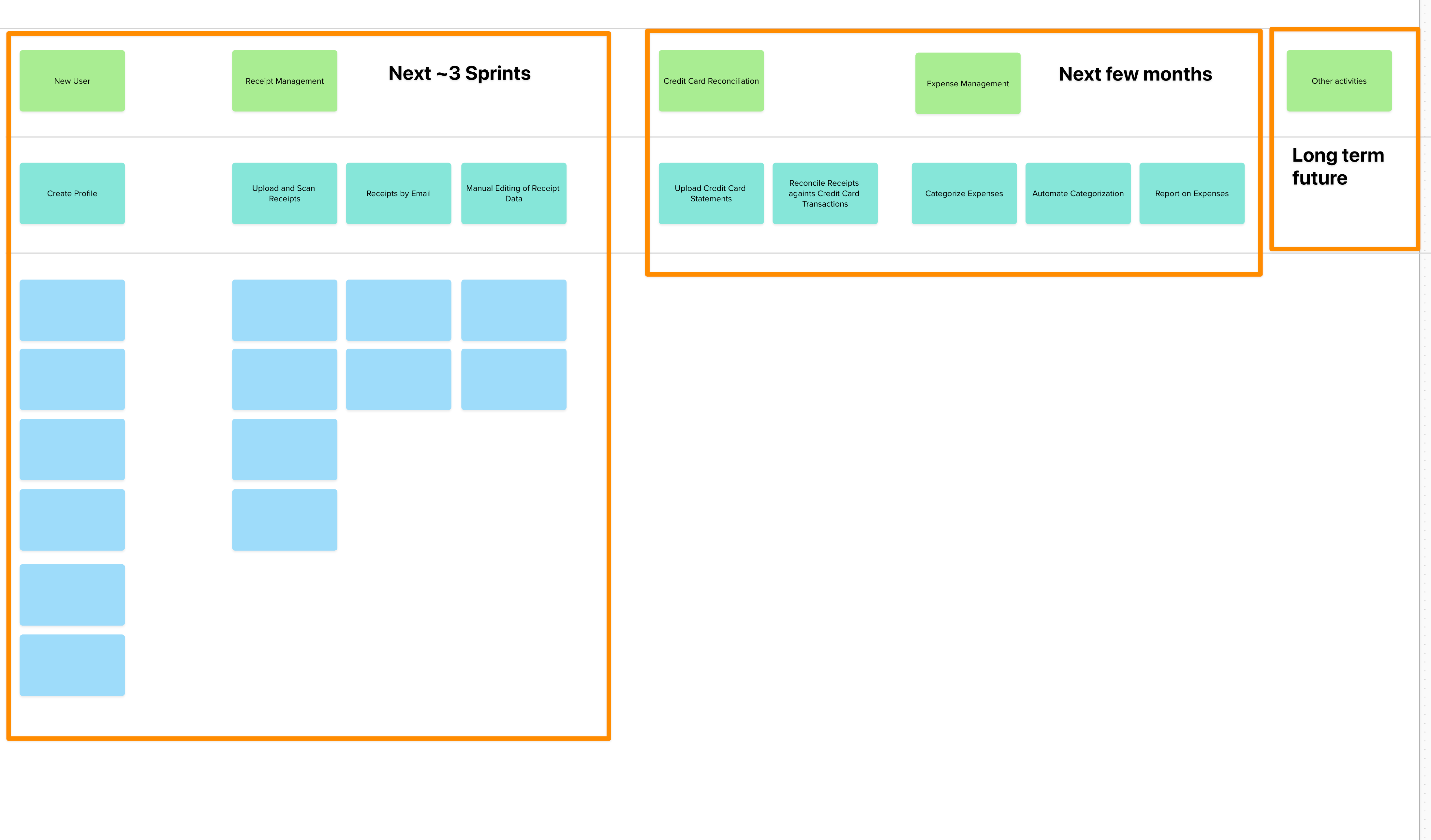

Overwhelmed by your product backlog? Story Mapping can overcome that mess and even buy back a year of your life.

Read full article

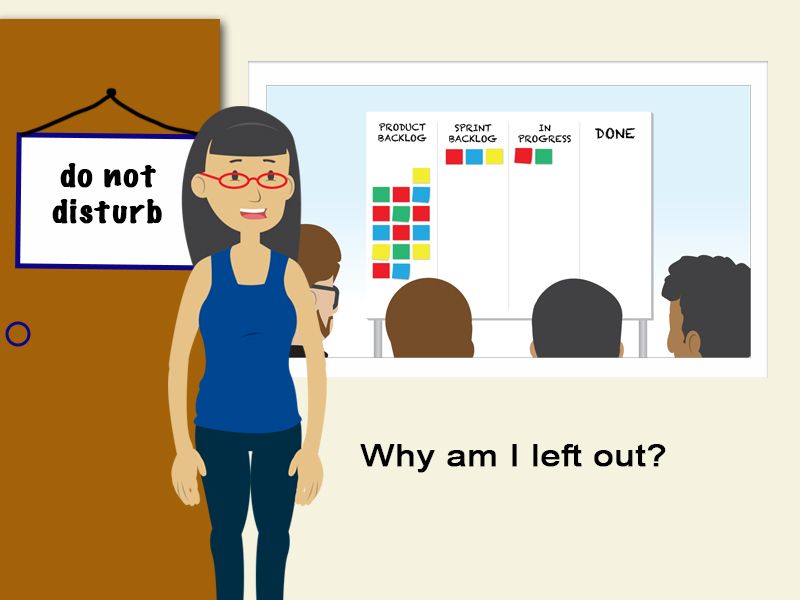

The Scrum Guide says POs aren't required at Daily Scrum, but should they be? Explore why Product Owners should attend to increase team value.

Read full article

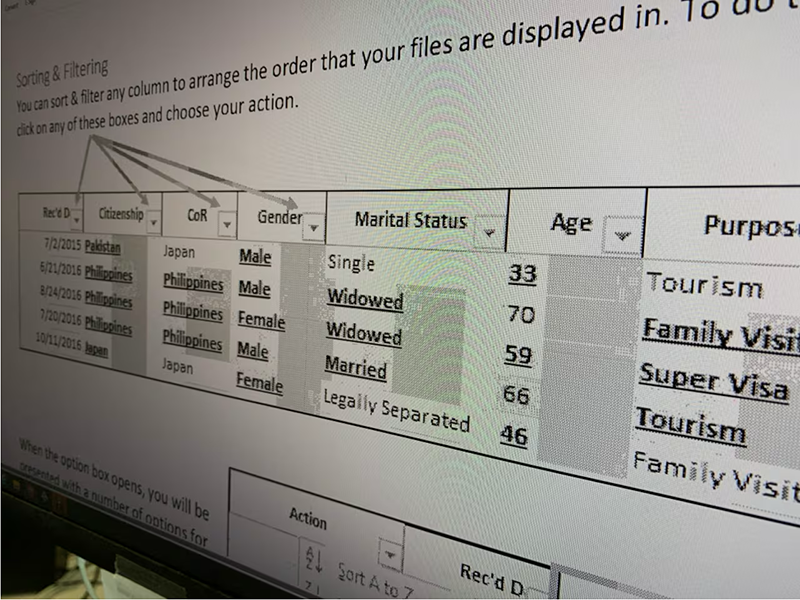

Is IRCC's focus on speed creating unfair visa denials? Explore how mis-measurement clogs the system and hurts applicants.

Read full article

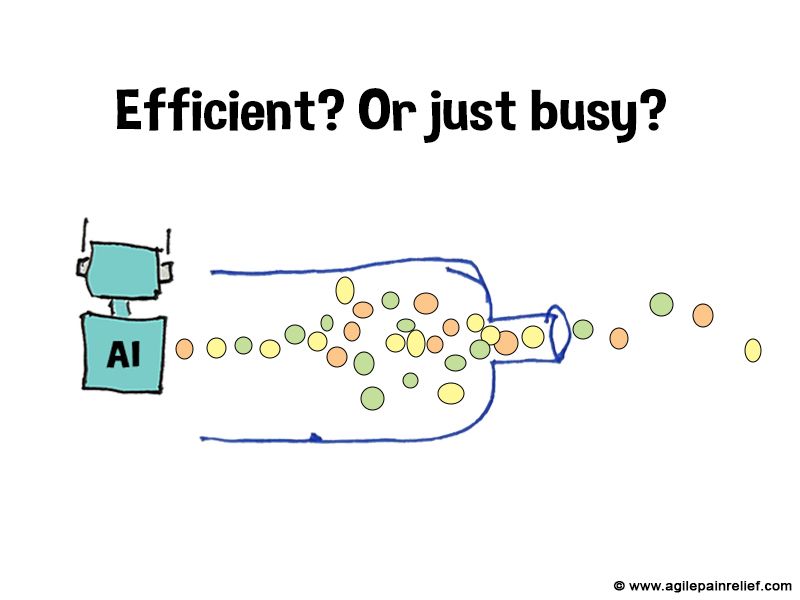

GenAI and PredictiveAI promise greater efficiency, but speeding up workers often makes bottlenecks worse. Focus on these three things instead.

Read full article

Looking beyond GenAI promises to understand real-world impact. Why faster coding might be creating bigger problems in the longer term.

Read full article

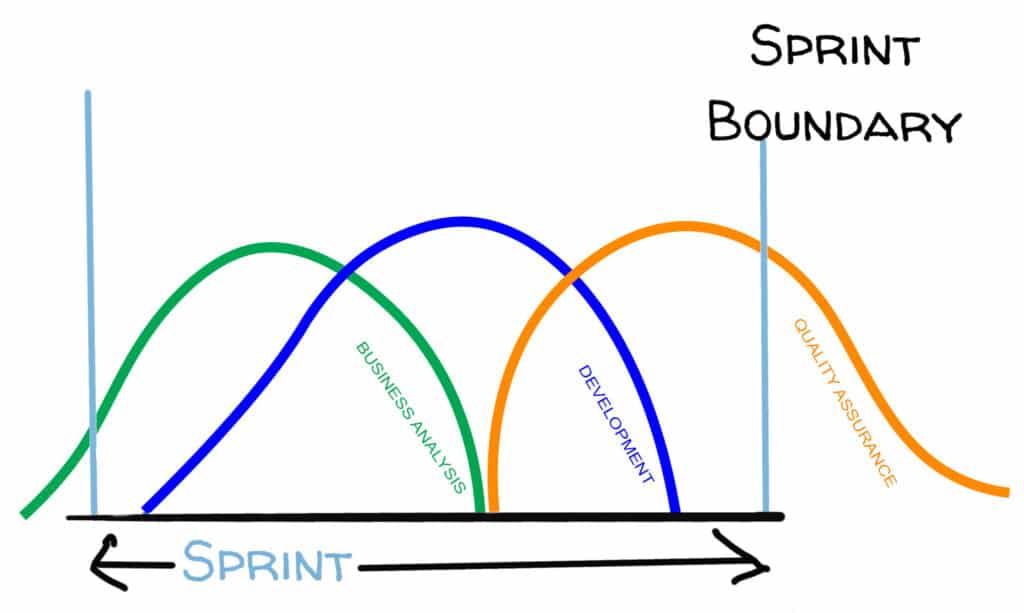

Is your Scrum team stuck in mini-waterfall? Developers racing ahead while QA falls behind is a sign of Scrummerfall. Here's how to break the pattern.

Read full article

Product Backlog Refinement - Learn from my mistakes. From Boring to Valuable, all while taking less time

Read full article

_Scrum team **Working Agreements** are a simple, powerful way of creating explicit

Read full article

Refinement drags on forever? 1hr feels like 3. Fix endless meetings, estimation debates, and unprepared teams using 5-min rules, Complexity Poker, and more

Read full article

Does your team's Sprint Planning seem like a complete and utter waste of time? Most of the time, the planning isn't the real problem.

Read full article

The Scrum Guide says POs aren't required at Daily Scrum, but should they be? Explore why Product Owners should attend to increase team value.

Read full article

That thing where you’re trying to work but... Surviving interruptions in your team. We look at where they come from and how to eliminate them.

Read full article

Learn what it takes to go from Working Group to True Team. Hint, there is hard work involved.

Read full article

Grouping individuals together doesn’t make them a team. Building High Performance Teams takes time and effort. It is especially important in a world of Generative AI
