Agile teams have been struggling since the earliest days with how to bring new people on to a team. In one of the first Agile books, there was a story of Alistair Cockburn walking a new hire through the team’s flip charts and telling the story of their work. I haven’t seen a team use […]

Notes from a Tool User

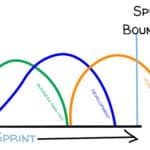

Sprint Goals Provide Purpose

Go Beyond Merely Completing Work Lists with a Sprint Goal. Research shows that people, whether acting individually or as a team, achieve more when working toward an objective that is specific, challenging, and concrete.[1] For that, we need clear Sprint Goals. A Sprint should be so much more than just completing a number of User […]

Scrum by Example – Is Your Scrum Team a Victim of Scrummerfall?

Is your Scrum team struggling with questions about who owns quality? Is testing way behind development? Are team members claiming no time for retrospective because they’re focused on delivery? They might be victims of what some call Scrummerfall, or Mini Waterfall. Let’s use our fictional World’s Smallest Online Bookstore (WSOBS) Scrum team to explore what […]

The Modern Guide to the Daily Scrum Meeting

Many people who attend Scrum training courses start with the impression that the Daily Scrum is the whole of Scrum. Others think the Daily Scrum centres on three questions (this was true a long time ago). This guide to the Daily Scrum meeting was created to address these misconceptions as well as answer common questions. […]

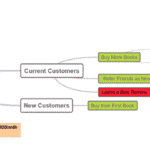

Impact Mapping – What It is, in Depth, with Examples

Work is humming along nicely when your Marketing VP stops by your desk and says, “We need to double monthly sales.” That’s it. That’s their entire instruction. After you recover from this bombshell, you will need to decide what tool you will use and who you will grab to help you start to tackle this […]

Scrum by Example – Product Backlog Refinement in Action

In Scrum, Product Backlog Refinement is an essential meeting of the Product Owner and the Development Team to gain clarity and a shared understanding of what needs to be done through discussion and sharing of ideas. The following is a guided example of how to run an effective Product Backlog Refinement meeting. We know that many people learn […]

Scrum by Example – Team Friction Inspires Working Agreements

Scrum team Working Agreements are a simple, powerful way of creating explicit guidelines for what kind of work culture you want for your Team. They are a reminder for everyone about how they can commit to respectful behaviour and communication. In this post we’ll see how the fictional World’s Smallest Online Bookstore (WSOBS) Scrum team struggles […]

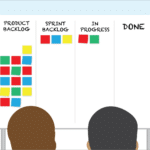

The Sprint Backlog: A Truly Complete Guide with Examples

We might not be able to make Sprint Backlogs exciting, but we can make them more effective. Let’s look at what a Sprint Backlog is, what purpose it serves, and how to create, manage, and improve it. What is a Sprint Backlog? A Sprint Backlog is a list of Product Backlog Items (PBIs) that the Developers think they can complete during the […]